Perthblog

Is AI too left-brained to ensure global survival?

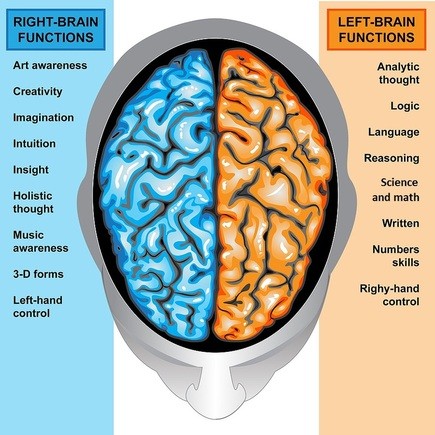

I’m thinking that you think you have a brain. Nope. Actually, you have two. We all have two brains. As in two hemispheres, the left and the right. They both do very different things, although there is some overlap. The left hemisphere does logic, process, rules and analysis. The right does envisioning, emotion, empathy and social stuff. So what?

In our modern times, the left brain has become the dominant one. We humans are all about analysis, logic and rules. Digital and computer stuff is all left-brain. That’s got us to a digital age with videogames, smartphones and apps. What’s not to like? A lot: see the book The Master and His Emissary: The Divided Brain and the Making of the Western World, by Iain McGilchrist.

You might wonder why computer stuff and videogames are so terribly bad. Ever heard about algorithms? They are the Holy Grail of AI. And we know that AI is great, right?

What is the objective of AI? It’s not just about automating things. It’s about achieving the Singularity, the point at which human intelligence merges with machines. For the secular amongst us, this is humans gaining God-like powers. And it’s the left brain that is doing it!

But wait; the left-brain is, above all, transactional. That’s another way of saying it has a short-term and utilitarian focus. It’s not into unintended consequences, happiness, or the health of the planet. It’s about having short-term objectives like profit, automation, utility, clever, even super-smart gadgets and machines. But it isn’t about making the world a much better place for all of us and the countless succeeding generations that should rightly follow us. For AI, we humans have no responsibility for the generations that follow us.

Let’s check out where AI is getting us so far. Start with Earth-wide pollution: did you notice that South Asia has the heaviest air pollution on Earth? Add to that China and the African countries, of course, not to mention the developed world. How about filthy water, omnipresent plastic, poisoned soils? Climate change? The melting of glaciers and the Antarctic ice shelves? The disappearance of natural habitats and of whole species, everything from insects to the almost total disappearance of large predators?

And that’s just the natural stuff. What about the imminent threat of nuclear war, rapidly spreading ultra-high rates of drug abuse, mental health problems, suicide and so on? How about global systems of governance which are clearly breaking down, even in the developed countries? The constant threats from cyber-attacks, hacking, accidental war and so on?

Left-brain thinking leads us to focus on machine learning, smart algorithms, deep learning, raw logic, making machines faster, smarter and more obedient. It’s about increasing the IQ of machines. It’s not about increasing wisdom and leaving a healthy habitat for the generations that should follow us but almost certainly won’t exist to tell the tale.

We select programmers and coders and other AI practitioners for their competency in writing good code, figuring out smart algorithms and the like. But none of them is selected for their right-brain strengths; usually these are a huge disadvantage. We don’t want AI types to dwell on the significance of what they are doing, because that might upset the applecart.

AI is about optimizing the short-term. Left-brain thinking is digging us into an even bigger hole. The right-brain virtues of social collaboration and harmony, empathy, vision and global happiness have been largely abandoned by AI because of its utilitarian, transactional and short-term bias. That’s an old world that has disappeared. Maybe right-brain thinking could make religion stronger. What a disaster!

The more thoughtful amongst us now believe that the future of the Earth as a healthy human habitat is deeply in doubt. The betting now is not on whether human civilization will end, but on when. Stephen Hawking predicted 100 years. He also believed that AI would hasten the end for humans. I would say that even his 100 years is a leap of faith, given all the dystopian trends at work in the natural, technological and political environment on Earth today.

It is increasingly looking like AI is part of the problem, rather than being part of the solution. The Singularity is looking much less God-like and more like Armageddon. We’re hurtling there at a speed that we haven’t digested yet. We have been deceived into thinking that AI is the ticket to beating the odds against the survival of humans and life on Earth rather than a one-way ticket to self-destruction.

Maybe there’s still time. But this presupposes wisdom on the part of human leaders which isn’t readily visible, anywhere on the planet.

Climate change is only a tiny part of the problem. A movement to make AI more right-brained is one way to fix the problem.

If it isn’t already far too late.
